Deep dive · ICCV 2025

PRISM: Debiasing Vision-Language Models via LLM-Guided Embedding Projection

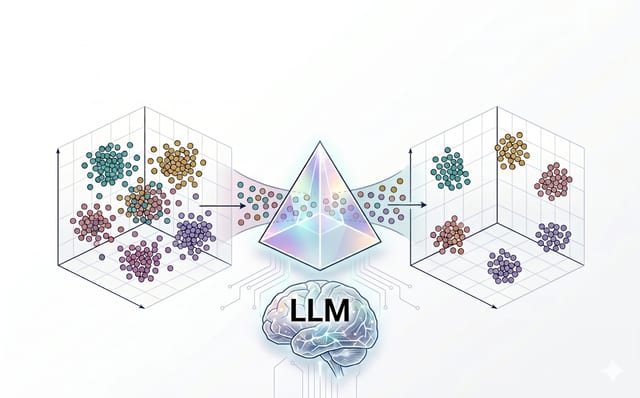

A data-free, task-agnostic debiasing framework for CLIP-style vision-language models.

Problem

Vision-language models such as CLIP encode strong implicit biases that surface as spurious correlations in downstream tasks (e.g., background, gender, or demographic shortcuts). Existing debiasing methods typically require labeled bias attributes, paired counterfactual data, or task-specific fine-tuning, which is expensive and brittle.

Approach

PRISM is a two-stage, data-free debiasing recipe. First, an LLM is prompted with only the class names to generate bias-aware scene descriptions that span plausible spurious factors. Second, a small linear projection is learned on top of the frozen CLIP embedding space using a Latent-space Debiasing loss that simultaneously enforces (i) intra-class invariance to spurious shifts and (ii) inter-class separability of the original semantic content. No new images, no bias labels, and no encoder fine-tuning are needed.
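The second stage can be sketched as a small optimization problem. The snippet below is a minimal illustration under stated assumptions, not the paper's Latent-space Debiasing loss: it assumes the LLM descriptions have already been encoded by the frozen CLIP text encoder into unit-norm vectors `emb`, each tagged with the class whose name appeared in its prompt (so no bias labels are involved). Invariance is modeled as the spread of projected descriptions around their class centroid, and separability is approximated by anchoring the projected centroids to their original positions. The function names, `alpha`, `lam`, and the gradient-descent loop are all hypothetical.

```python
import numpy as np

def centroids_and_deviations(emb, labels):
    """Split each description embedding into its class centroid and a residual.

    emb: (N, d) unit-norm text embeddings of bias-aware descriptions
    labels: (N,) integer array, the class each description was generated for
    The residual is what varies across descriptions of one class (background,
    gender, ...), i.e. the part a debiased space should ignore.
    """
    classes = np.unique(labels)
    mu = np.stack([emb[labels == c].mean(axis=0) for c in classes])  # (C, d)
    dev = emb - mu[np.searchsorted(classes, labels)]                  # (N, d)
    return mu, dev

def debias_loss(W, emb, labels, alpha=1.0, lam=0.1):
    """Hypothetical stand-in for a latent-space debiasing objective."""
    mu, dev = centroids_and_deviations(emb, labels)
    eye = np.eye(W.shape[0])
    # (i) Invariance: descriptions of one class should collapse after projection.
    inv = np.mean(np.sum((dev @ W.T) ** 2, axis=1))
    # (ii) Separability proxy: projected class centroids stay where they were,
    # so classes tend to remain as far apart as in the original space.
    keep = np.mean(np.sum((mu @ (W - eye).T) ** 2, axis=1))
    # Ridge term keeping W near the identity (the unmodified embedding space).
    reg = np.sum((W - eye) ** 2)
    return inv + alpha * keep + lam * reg

def fit_projection(emb, labels, alpha=1.0, lam=0.1, lr=0.1, steps=500):
    """Plain gradient descent on debias_loss, starting from the identity."""
    mu, dev = centroids_and_deviations(emb, labels)
    eye = np.eye(emb.shape[1])
    W = eye.copy()
    for _ in range(steps):
        # Analytic gradient of each quadratic term of debias_loss.
        grad = (2.0 / len(dev)) * W @ dev.T @ dev
        grad += alpha * (2.0 / len(mu)) * (W - eye) @ mu.T @ mu
        grad += 2.0 * lam * (W - eye)
        W -= lr * grad
    return W

# Usage with random stand-ins for CLIP text embeddings (hypothetical data):
rng = np.random.default_rng(0)
d, n_classes, n_variants = 16, 3, 6
class_dirs = rng.normal(size=(n_classes, d))
bias_dirs = rng.normal(size=(n_variants, d))  # spurious factors shared by all classes
emb = np.array([class_dirs[c] + 0.7 * bias_dirs[k]
                for c in range(n_classes) for k in range(n_variants)])
emb /= np.linalg.norm(emb, axis=1, keepdims=True)
labels = np.repeat(np.arange(n_classes), n_variants)
W = fit_projection(emb, labels)
print(debias_loss(np.eye(d), emb, labels), debias_loss(W, emb, labels))
```

Every term is quadratic in `W`, so this toy objective is convex and gradient descent with a small step lowers it monotonically; the second printed value is therefore below the first. Starting from the identity keeps the fitted projection close to the original CLIP space wherever the invariance term does not push it away.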

Key results

- Improves worst-group accuracy on Waterbirds and CelebA, reaching levels competitive with methods that require group labels.

- Generalizes across CLIP backbones (ViT-B/16, ViT-L/14) and downstream classifiers without retraining the encoder.

- Cheap to fit: only the projection matrix is learned while the encoder stays frozen, so each task costs minutes of compute.
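Worst-group accuracy, the metric in the first result, is the accuracy of the weakest (class, spurious attribute) group rather than the average over all samples; on Waterbirds the groups are the four combinations of bird type (landbird, waterbird) and background (land, water). Group labels are needed only to score the model, not to fit PRISM. A minimal sketch, with a hypothetical helper name and toy predictions:

```python
from collections import defaultdict

def worst_group_accuracy(preds, labels, groups):
    """Minimum accuracy over (class label, spurious attribute) groups."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for pred, label, group in zip(preds, labels, groups):
        key = (label, group)
        total[key] += 1
        correct[key] += int(pred == label)
    return min(correct[k] / total[k] for k in total)

# Toy Waterbirds-style split: label 0 = landbird, 1 = waterbird; group = background.
labels = [0, 0, 0, 0, 1, 1, 1, 1]
groups = ["land", "land", "water", "water", "land", "land", "water", "water"]
preds = [0, 0, 0, 1, 1, 0, 1, 1]
print(sum(p == y for p, y in zip(preds, labels)) / len(labels))  # 0.75
print(worst_group_accuracy(preds, labels, groups))               # 0.5
```

The toy predictions show why the metric matters: 75% average accuracy hides a 50% accuracy on the groups where the background disagrees with the bird type.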

Takeaways

- Spurious-attribute supervision is not necessary if you can sample bias-aware text descriptions from an LLM.

- Linear interventions in the CLIP latent space remain a surprisingly strong and inspectable baseline.

- Task-agnostic debiasing is feasible: one projection serves several downstream classifiers.
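The last takeaway can be illustrated with CLIP-style zero-shot classification inside the projected space. This is a sketch under assumptions: it supposes that the same projection `W` is applied to both image and text embeddings and that each class is represented by a single text prompt; `l2_normalize` and `zero_shot_predict` are illustrative names, and the random vectors stand in for real CLIP embeddings. The same `W` is reused unchanged for two different label sets.

```python
import numpy as np

def l2_normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def zero_shot_predict(image_emb, class_text_emb, W):
    """Zero-shot classification after projecting both modalities with W.

    image_emb: (N, d) frozen image embeddings
    class_text_emb: (C, d) frozen text embeddings, one prompt per class
    W: (d, d) debiasing projection (assumed shared by images and text)
    """
    img = l2_normalize(image_emb @ W.T)
    txt = l2_normalize(class_text_emb @ W.T)
    # Cosine similarity of every image to every class prompt; pick the best.
    return np.argmax(img @ txt.T, axis=1)

# Usage: one projection, two downstream label sets (hypothetical data).
rng = np.random.default_rng(0)
d = 16
W = np.eye(d)                      # stand-in for a fitted projection
task_a = rng.normal(size=(2, d))   # e.g. prompts for "landbird", "waterbird"
task_b = rng.normal(size=(5, d))   # an unrelated five-class label set
images = rng.normal(size=(3, d))
print(zero_shot_predict(images, task_a, W))
print(zero_shot_predict(images, task_b, W))
```

Because `W` only transforms the shared embedding space, switching tasks means swapping the class prompts, not refitting the encoder.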