Deep dive · AAAI 2025

FedGaLA: Federated Unsupervised Domain Generalization via Gradient Alignment

First framework for unsupervised federated domain generalization, grounded in a gradient-alignment theory.

Problem

In federated learning, each client sees unlabeled data drawn from its own distinct distribution. Existing federated domain-generalization work assumes both labels and a centralized validation domain. That premise is unrealistic for privacy-preserving deployments, where data and any labels stay on-device and the target domains are unseen during training.

Approach

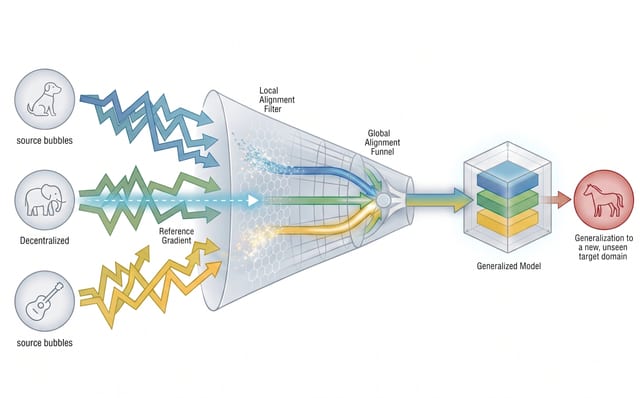

We formalize the unsupervised federated domain generalization (UFDG) setting and prove a generalization bound that decomposes the target risk into (i) local self-supervised loss, (ii) intra-client gradient agreement, and (iii) cross-client gradient agreement. FedGaLA operationalizes this bound with two complementary alignment terms: a Local Alignment regularizer that aligns per-batch gradients on each client, and a Global Alignment step at the server that aligns aggregated gradients across clients before applying the update.
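The two alignment terms can be illustrated with a minimal sketch. This is not the paper's implementation: it assumes gradients are flattened into plain lists of floats, measures agreement with cosine similarity, and reads "global alignment" as dropping client updates that disagree with the average update before re-averaging. The names `local_alignment_penalty`, `global_alignment_aggregate`, and `threshold` are hypothetical and chosen only for illustration.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def cosine(a: Vector, b: Vector, eps: float = 1e-12) -> float:
    """Cosine similarity; returns 0.0 if either vector is (near) zero."""
    na, nb = math.sqrt(dot(a, a)), math.sqrt(dot(b, b))
    if na < eps or nb < eps:
        return 0.0
    return dot(a, b) / (na * nb)


def mean_vector(vectors: Sequence[Vector]) -> List[float]:
    """Coordinate-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def local_alignment_penalty(batch_grads: Sequence[Vector]) -> float:
    """Hypothetical client-side regularizer (an assumption, not the paper's).

    Returns 1 minus the mean pairwise cosine similarity between per-batch
    gradients: 0.0 when all batches agree, up to 2.0 when they oppose.
    """
    n = len(batch_grads)
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    if not pairs:
        return 0.0
    mean_cos = sum(cosine(batch_grads[i], batch_grads[j]) for i, j in pairs) / len(pairs)
    return 1.0 - mean_cos


def global_alignment_aggregate(
    client_updates: Sequence[Vector], threshold: float = 0.0
) -> List[float]:
    """Hypothetical server-side step (an assumption, not the paper's).

    Averages all client updates to get a reference direction, keeps only the
    updates whose cosine similarity with that reference exceeds `threshold`,
    and returns the mean of the kept updates. Falls back to the plain
    average if nothing passes the filter.
    """
    reference = mean_vector(client_updates)
    kept = [u for u in client_updates if cosine(u, reference) > threshold]
    return mean_vector(kept) if kept else reference


if __name__ == "__main__":
    # Two agreeing batches incur no penalty; two opposing batches incur the maximum.
    print(local_alignment_penalty([[1.0, 0.0], [1.0, 0.0]]))
    print(local_alignment_penalty([[1.0, 0.0], [-1.0, 0.0]]))
    # The third client points away from the average and is filtered out.
    print(global_alignment_aggregate([[1.0, 0.0], [1.0, 0.2], [-0.5, 0.0]]))
```

In a real training loop the local penalty would be added to the self-supervised loss on each client, and the server would apply the aggregated update to the global model. The filtering rule and the threshold value here are illustrative choices only.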

Key results

- State of the art on PACS, OfficeHome, VLCS and TerraIncognita under the new UFDG protocol.

- Robust to client count and heterogeneity; the alignment terms degrade gracefully when clients are scarce.

- Theory predicts the empirical ranking of variants (local alignment alone, global alignment alone, or both) across benchmarks.

Takeaways

- Gradient alignment is a sufficient learning signal even without labels in federated settings.

- A single principle (alignment) cleanly explains both client-side and server-side regularizers.

- Privacy-preserving generalization does not require synthetic data or extra communication rounds.